Welcome to This PySpark Tutorial:

This is the main PySpark tutorial page, where you can explore everything about PySpark. PySpark is a popular interface for accessing Apache Spark features from the Python programming language. So, if you come from a core Python background and want to build a career in Big Data, Data Science, or Data Engineering, PySpark is a good place to start.

Before getting into PySpark, let’s understand the difference between PySpark and Spark.


What is Apache Spark?

Apache Spark is a popular open-source framework for big data processing. It is written in the Scala programming language. Apache Spark is widely used in data engineering, data science, and machine learning, and it can run on a single machine or on a cluster.

Key features of Apache Spark:

- Batch/Streaming Data:- We can perform either batch processing or stream processing. In batch processing, data is collected and processed periodically in bounded chunks. In stream processing, data arrives continuously and is processed as it comes in.

- Multiple Languages:- We can use our preferred language, such as Scala, Java, Python, R, or SQL, to process data.

- SQL Analytics:- Apache Spark also lets us run SQL queries, for example to produce reports for dashboards.

- Machine learning:- Spark provides an MLlib module to perform machine learning operations.

Let’s move on to PySpark.

What is PySpark?

PySpark is a Python interface to Apache Spark, installed just like any other Python package. Using the PySpark APIs, an application can use the functionality of Apache Spark to load large-scale datasets and perform operations on them.

Who Can Learn PySpark?

If you come from a Python background, PySpark is a natural choice, because it lets you use Spark through a familiar Python API.

Here we have listed all the PySpark tutorials that will be helpful in your PySpark journey.

PySpark Tutorial Library

- PySpark DataFrame Tutorial for Beginners

- How to Count Null and NaN Values in Each Column in PySpark DataFrame?

- Merge Two DataFrames in PySpark with Same Column Names

- How to Apply groupBy in PySpark DataFrame

- Merge Two DataFrames in PySpark with Different Column Names

- How to Change DataType of Column in PySpark DataFrame

- Drop One or Multiple Columns from PySpark DataFrame

- How to Convert PySpark DataFrame to JSON (3 Ways)

- How to Write PySpark DataFrame to CSV

- How to Convert PySpark DataFrame Column to List

- How to Convert PySpark DataFrame to RDD

- How to Convert PySpark Row to Dictionary

- PySpark Column Class with Examples

- PySpark Sort Function with Examples

- PySpark col() Function with Examples

- How to Read CSV Files Using PySpark

- How to Format a String in PySpark DataFrame using Column Values

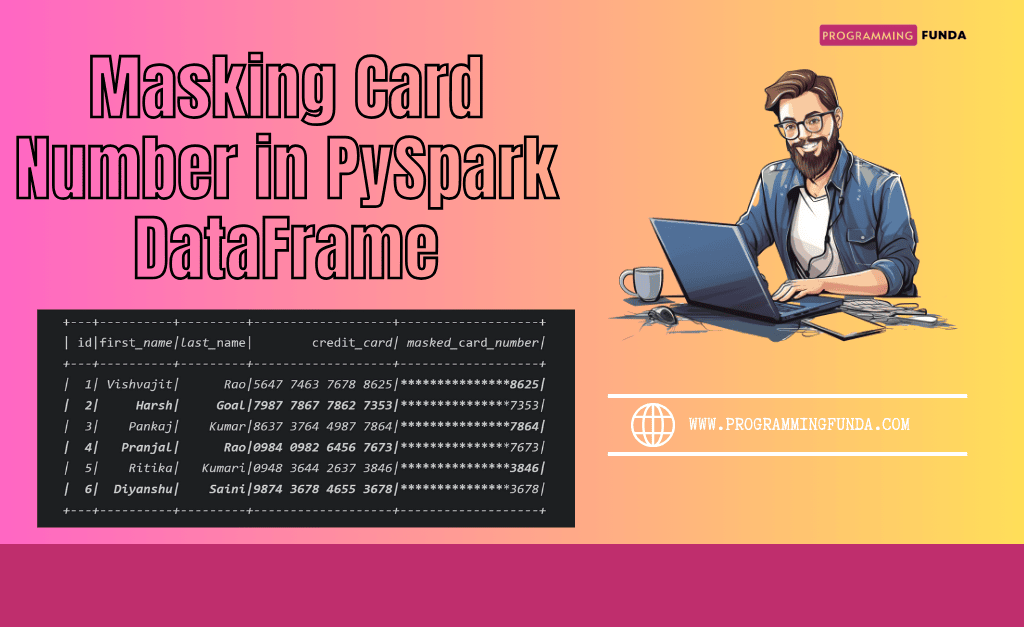

- How to Mask Card Number in PySpark DataFrame

- How to Remove Time Part from PySpark DateTime Column

- How to Explode Multiple Columns in PySpark DataFrame

- PySpark Normal Built-in Functions

- PySpark SQL DateTime Functions with Examples

- PySpark SQL String Functions with Examples